OverheadMemoryManager error while uploading VM from Fusion to vSphere 6

Thursday, July 2, 2015 at 10:35 AM | Posted by Jared Valentine

I tried uploading a virtual machine from my MacBook Pro running VMware Fusion Pro 7 to my vSphere environment today. Fusion is connected to the vCenter Server, and after selecting "Upload to Server", I can select the vCenter Server, then the ESXi host, and finally the datastore.

It doesn't work.

Here's the error:

Error:

Error processing attribute "type" with value "OverheadMemoryManager"

while parsing MoRef for ManagedObject of type vim.OverheadMemoryManager

at line 7, column 3236

while parsing property "overheadMemoryManager" of static type OverheadMemoryManager

while parsing serialized DataObject of type vim.ServiceInstanceContent

error parsing Any with xsiType ServiceContent

at line 7, column 33

while parsing return value of type vim.ServiceInstanceContent, version vim.version.version10

at line 7, column 0

while parsing SOAP body

at line 6, column 0

(snip)

I eventually found this via Google, but it wasn't much help:

- https://communities.vmware.com/thread/510512

I did find a workaround: instead of uploading from Fusion through the vCenter Server, connect Fusion directly to an ESXi host, bypassing vCenter completely. Now, when you upload to the server, select the host directly, then the datastore, click next, next, next, OK, finish (or whatever it is), and it will work.

As of 7/2/2015, yes, Fusion Pro 7 is patched to the latest version, as is the vCenter Server Appliance (6.0.0 + patch1).

Freenas losing NFS shares after deleting a pool

Tuesday, June 9, 2015 at 2:12 PM | Posted by Jared Valentine

Had a fun experience today. My home lab has at least one Freenas 9.3 storage server configured with two separate volumes. One volume is shared via NFS to my VMware vSphere lab environment, while the other is used for general SMB/CIFS duties.

After running out of storage on the general-use volume, I decided to play "musical data" and shuffle things around with the main goal of adding larger/newer/additional drives. After backing up the data, I deleted the general-use volume. The vSphere environment immediately went into a panic, with sirens and red lights flashing everywhere. (okay, maybe no sirens or lights, but it was NOT happy)

I knew the issue was in the Freenas realm because it all came crashing down when I deleted the general-use volume. While I was fairly confident that I had not deleted the NFS shares, they were now missing from the configuration! My guess was that the file-sharing configuration had been stored on that general-use volume, and I eventually tracked it down.

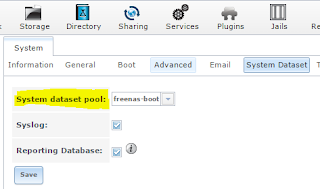

Freenas has a "System Dataset" as shown here:

Unfortunately for me, the System dataset pool had defaulted to the first volume I configured: the general-use volume. I believe that Freenas recently added the ability to use the boot volume as the System dataset pool, which is great! (I just wish I had known a little sooner.)

After changing my system dataset pool to freenas-boot, I re-configured my NFS exports and the vSphere environment sprang back to life.
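Before deleting a pool, it may be worth checking which pool currently holds the system dataset. Below is a minimal Python sketch, not a FreeNAS tool: it assumes the system dataset is named `<pool>/.system` and that you feed it the output of `zfs list -H -o name` captured from the FreeNAS shell. The helper names and the pool names `general` and `vmstore` in the sample are hypothetical.

```python
def pools_hosting_system_dataset(zfs_list_output: str) -> set[str]:
    """Return the pools that contain a top-level '.system' dataset.

    Expects `zfs list -H -o name` output (one dataset name per line).
    Assumes the system dataset is named '<pool>/.system'.
    """
    pools: set[str] = set()
    for line in zfs_list_output.splitlines():
        parts = line.strip().split("/")
        # Matches '<pool>/.system' itself and any of its children.
        if len(parts) >= 2 and parts[1] == ".system":
            pools.add(parts[0])
    return pools


def safe_to_destroy(pool: str, zfs_list_output: str) -> bool:
    """True if the pool does not appear to host the system dataset."""
    return pool not in pools_hosting_system_dataset(zfs_list_output)


# Hypothetical `zfs list -H -o name` output before moving the system dataset.
SAMPLE = """\
freenas-boot
freenas-boot/ROOT
general
general/.system
general/.system/samba4
vmstore
"""

print(pools_hosting_system_dataset(SAMPLE))  # {'general'}
print(safe_to_destroy("general", SAMPLE))    # False
```

If `general` shows up as hosting `.system`, moving the system dataset to `freenas-boot` first (as in the post) would be the step to take before deleting it.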

Not dissing Freenas at all... just posting my experiences. I think Freenas is the bee's knees and will keep using it for the foreseeable future.

Motorola AP8232 running 5.5.0.0-173506X

Tuesday, June 2, 2015 at 9:08 PM | Posted by Jared Valentine

I purchased a handful of Motorola AP8232s on eBay for my home network, sold "as-is, no returns". These don't boot up like the other Motorola APs I've worked with, nor are they running a familiar version of WiNG.

These are running BootOS 5.5.0.0-173506X and won't actually let you log in to the standard CLI. Most Motorola APs use 19.2k baud for the serial console, but these run at 115.2k. You can use the standard "reset/factoryDefault" login to reset them to factory defaults, but that won't help. After a few errors and timeouts, it'll drop you to either a boot# or diag> prompt. You can even get to a Linux root shell with the "service start-shell" command!

My guess is that this firmware is an engineering build and you can do all sorts of interesting things. I'm not interested in getting that in-depth with this AP. All I really wanted was a handful of enterprise-grade 802.11ac access points.

After a little bit of hacking around, I finally figured out how to get it to run production WiNG code! I'll save the how-to for another blog post. I just wanted to get this out there in case anyone else has similar APs and wants help getting them up and running.

Truncated serial console output from the engineering build, along with the failed/timed out login:

[ 85.322215] ge1 { autoneg'ed 1Gb/s, autoneg'ed full duplex, Cu }

Waiting for system initialization to complete.............................[ 167.852645] CCB:15:MROUTER Port ge1 detected on Vlan 1

.

process "/usr/sbin/snmpd -f" started

Please press Enter to activate this console.

ap8232-A3632C login: admin

Password:

Unable to connect to configuration-manager: Resource temporarily unavailable

Login incorrect

ap8232-A3632C login: admin

Password:

Login timed out

Please press Enter to activate this console.

ap8232-A3632C login: admin

Password:

Unable to connect to configuration-manager: Resource temporarily unavailable

Welcome to CLI

Unable to connect to configuration-manager, executing limited diagnostic shell

==== WARNING ====

The CLI process has not initialized.

Please exit this shell and try again.

If this problem persists then this shell

provides a limited subset of commands

to allow you to restore the system to

a working state. Please use "help" or "?"

to see a list of available commands.

diag >set

boot Boot commands

delete Deletes specified file from the system.

exit Exit from the CLI

help Description of the interactive help system

logout Exit from the CLI

reload Halt and perform a warm reboot

service Service Commands

show Show running system information

upgrade Upgrade firmware image

Restoring a Time Machine backup from a Drobo Mini

Monday, June 30, 2014 at 11:26 AM | Posted by Jared Valentine

Time Machine is great, for the most part. You turn it on and it keeps stuff backed up. The full system restoration part? Not so easy... at least for my MacBook Pro.

tl;dr = USB 2.0 cable ftw

Here's what I tried and the outcomes:

Thunderbolt: Nope. When you boot up in recovery mode (Command-R), it won't see the Drobo Mini.

USB 3.0: Nope, it only partially works. I could get the recovery process to 4% or 5%, and then it would hang indefinitely. How do you know if you're using USB 3.0? The inside of the connector will be blue, and the Drobo end of the cable will have a hump.

USB 2.0/1.0: Works great. Yes, it's 2014, but who cares? When it comes to a full system restore, reliability trumps performance every time. I was able to get a full system restore with just one try using an old USB cable.

8GB DDR3 DIMMs on an Intel x58 Motherboard? Yep!

Sunday, March 9, 2014 at 11:11 AM | Posted by Jared Valentine

After the great RAM fiasco of 2014, I decided to test all of my RAM - even the extra sticks sitting in the drawer.

One of my test systems is a Gigabyte GA-EX58-UD5 motherboard. My understanding is that the X58 chipset supports up to 6x4GB DDR3 memory sticks, for a total of 24GB RAM.

Without thinking, I popped in a pair of 8GB DIMMs to test. Lo and behold, the system found the RAM and it tested just fine! Unfortunately, this motherboard still seems to have a "maximum" limit of 24GB total system memory. Still, it's nice to know there's a little more flexibility in memory selection on that platform. At least in this case, yes, the X58 chipset is capable of addressing 8GB DIMMs.
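The memory arithmetic can be sketched as below. This is a minimal illustration under one stated assumption: the 24GB figure is the limit this particular board appeared to enforce, and anything installed above it is treated as unusable; the post doesn't say how the board behaves past that point. `memory_summary` is a hypothetical helper.

```python
# Limit this particular board appeared to enforce (per the post).
# Assumption: memory above it is simply unusable; not a published X58 spec.
BOARD_MAX_GB = 24


def memory_summary(dimm_sizes_gb, board_max_gb=BOARD_MAX_GB):
    """Return (installed_gb, usable_gb, over_limit) for a list of DIMM sizes."""
    installed = sum(dimm_sizes_gb)
    usable = min(installed, board_max_gb)
    return installed, usable, installed > board_max_gb


print(memory_summary([4] * 6))  # (24, 24, False): the usual 6x4GB config
print(memory_summary([8, 8]))   # (16, 16, False): the pair of 8GB DIMMs tested
```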

Memory Errors in Memtest86+ and MemTest86 with SMP enabled

Tuesday, March 4, 2014 at 2:34 PM | Posted by Jared Valentine

Blogging because someone out there is probably banging their head against the wall just like I did today. I've had some "interesting" stability problems on my main desktop: an Asus P9X79 Deluxe motherboard, an Intel i7-4930K CPU, and eight G.Skill 4GB sticks (32GB total).

I've had memory go bad on me before, so I figured that would be a good place to start. I checked the basics (timing, voltage, etc.) and they were all configured properly in the BIOS.

I had a few copies (and various versions) of Memtest86+ and MemTest86 burned to USB sticks. I can't remember which one I started with, but both of them started spraying errors after a few tests. I went down to a single memory stick and still had the errors. I swapped the stick around with other sticks. Still no luck. All 8 sticks were erroring out. At this point I was pretty concerned because that could mean I had motherboard or CPU problems.

I quickly grabbed the current versions of both utilities and tried them out. One of them ran overnight without any issues, and the other failed as soon as it got to test 3... very perplexing. I fiddled with timings and RAM slots and still couldn't figure it out, although I did start seeing a pattern: the tests that passed were all single-CPU, and the tests that failed were all SMP.

After a little research, I ran across this gem of information. Essentially, some motherboard + CPU combinations exhibit CPU register corruption when used with certain versions of Memtest. That's why the single-CPU tests ran overnight just fine.

Given the stability problems, I wasn't happy with testing only a single CPU (in case my problem was CPU-related). The recommendation is to use SMP with Round Robin.
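Round Robin, per the workaround notes this post quotes, runs each test on a single CPU and rotates to the next CPU after every test. Here is a minimal Python model of that rotation; it illustrates the scheduling idea only, is not Memtest's actual code, and the function names are hypothetical.

```python
def round_robin_schedule(num_tests, num_cpus):
    """Assign each test to one CPU, rotating to the next CPU after every test."""
    if num_cpus < 1:
        raise ValueError("num_cpus must be at least 1")
    return [(test, test % num_cpus) for test in range(num_tests)]


def cpus_exercised(schedule):
    """Set of CPUs that have run at least one test so far."""
    return {cpu for _, cpu in schedule}


schedule = round_robin_schedule(num_tests=6, num_cpus=4)
print(schedule)                          # [(0, 0), (1, 1), (2, 2), (3, 3), (4, 0), (5, 1)]
print(sorted(cpus_exercised(schedule)))  # [0, 1, 2, 3]
```

With N CPUs, every CPU has been exercised only after N tests, which is why full coverage takes longer than running all CPUs at once, while still avoiding several CPUs running tests simultaneously.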

"the work-around implemented was to change the default CPU selection mode to round robin. In this mode only one CPU is used at a time, but after each test the CPU in use is rotated. So all CPUs will still get used, but only after a longer period of time."

I'm feeling a little better about my system now, at least as far as RAM is concerned. I have a round robin test running now and will let that go for another 24 hours... but so far so good.

Posted In: memtest

Adventures in the Home Lab: Tracking a Missing WiFi Device (iPad)

Tuesday, February 18, 2014 at 10:13 PM | Posted by Jared Valentine

My 7-year-old has been pretty bummed lately. He lost his iPad after our last trip to grandma's house. It's been about a month and we still haven't found it. It bugged me enough to do something about it today. I offered the kids $10 if they could find the iPad. Nope, that didn't do it. Time to move up the stack.

I logged into my Palo Alto Networks firewall and checked the object I had created for this iPad. With that I had the IP address and MAC address. Out of curiosity, I checked the traffic log to see when it was last online. Lo-and-behold, the thing is online...today...right now!

At this point, I'm pretty stoked. This means the iPad is still in the house somewhere! (And I'm super-impressed that it's still checking in on wifi after weeks of being missing.) Now, to find it.

I fired up Backtrack Linux with an Alfa USB 802.11b/g adapter, but it couldn't see the iPad's MAC address. The wireless controller reported that the iPad was connected to an AP using 802.11a (5GHz), and the Alfa USB adapter only supports 802.11b/g.

I shut down the 5GHz radios on the APs, which forced the iPad to connect to the b/g radio instead. Now the Alfa wifi adapter could see the iPad's wireless MAC address! From here, it was a game of hide-and-seek. I started upstairs and worked my way around the house, waving the wifi adapter around while watching the "power levels" reported by airodump-ng. I eventually worked my way to the basement, where I found stronger power levels. After a loop around the basement, I came to a point where the power levels were screaming at me. Sure enough, the iPad was right there, hiding in plain sight.
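The hide-and-seek step boils down to comparing signal-strength readings for the target MAC address at each spot and walking toward the strongest one. A minimal sketch, assuming you jot down a few power readings in dBm (as shown in airodump-ng's PWR column) at each location; `strongest_location` is a hypothetical helper, and the location names and values below are made up for illustration:

```python
from statistics import mean


def strongest_location(readings):
    """Return the location whose average signal strength is highest.

    `readings` maps a location label to a list of power readings in dBm.
    dBm values are negative; a value closer to zero (e.g. -45) is a
    stronger signal than one further from zero (e.g. -80).
    """
    if not readings:
        raise ValueError("no readings")
    return max(readings, key=lambda loc: mean(readings[loc]))


# Made-up readings for illustration only.
readings = {
    "upstairs": [-82, -79, -85],
    "kitchen": [-70, -68, -73],
    "basement": [-48, -45, -51],
}
print(strongest_location(readings))  # basement
```

Averaging a few readings per spot helps smooth out the jumpy values you see while waving the adapter around.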

Time to put "Find My iPhone" on this iPad so we don't have to play this game again. Maybe I'll set up triangulation on the wifi APs too. :)

Posted In: Backtrack, iPad, Palo Alto Networks, Wireless
